What 100+ operators get wrong about running Claude as infrastructure

Two years on ChatGPT. Six months on Claude. Here is what actually changed, and the 12-step system behind it.

Save this and block 30 minutes this week to run the workbook.

Send it to one founder, GTM operator or RevOps lead whose team is prompting from scratch every day.

The free AI with Claude workbook is here:

The 90-second version

The problem: your AI output drifts every week because context lives in your head, not your system

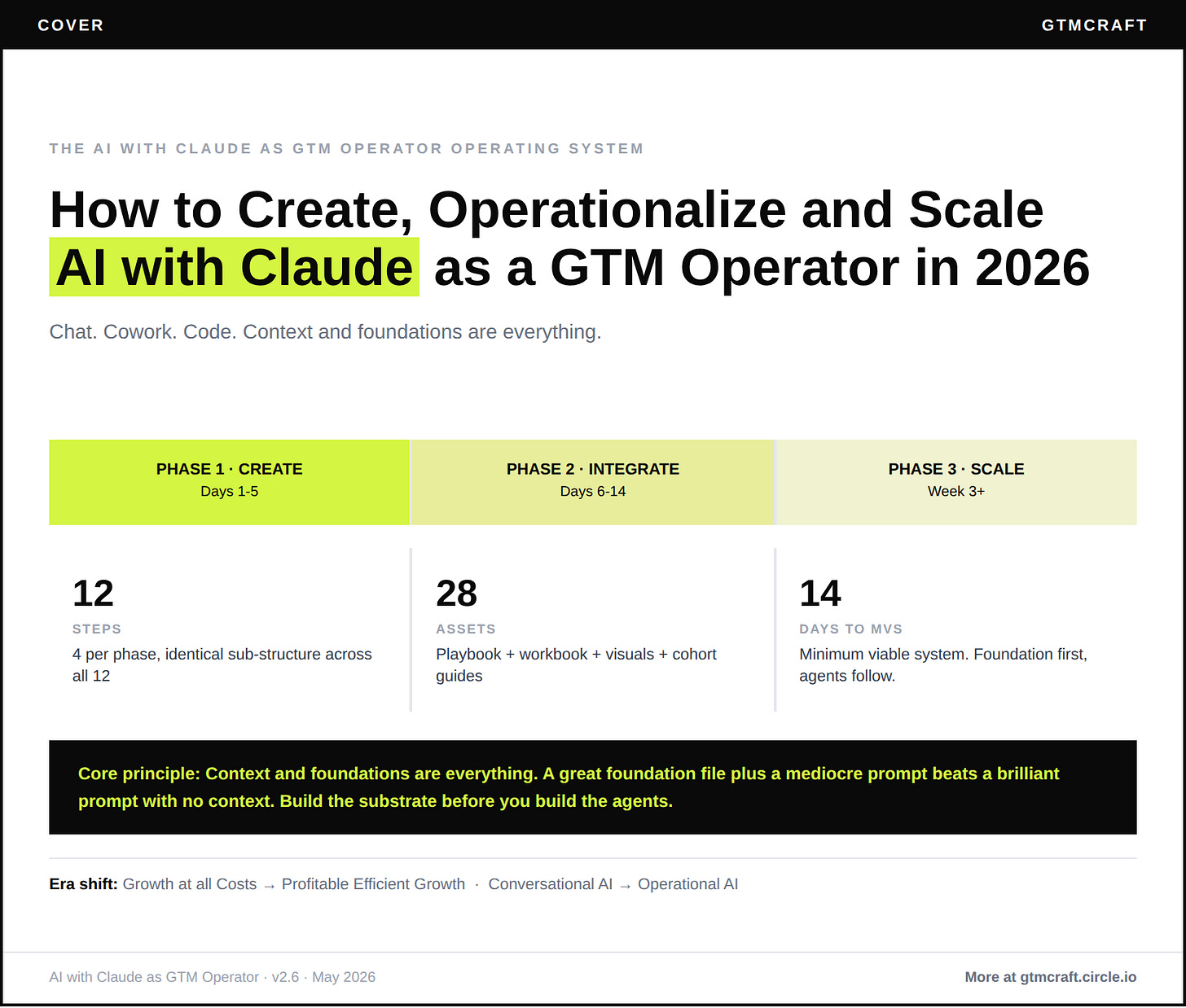

The system: the AI with Claude operating system, a 12-step playbook across three phases

The asset: [AI with Claude workbook, free download]

The action: complete your self-assessment before Wednesday’s edition drops

Read time: 4 min

What changed since last week

Last week was the GTMcraft launch. You received multiple updates on what this community is, why it exists, and where it is going. This week we return to the weekly rhythm: one GTM challenge, one playbook to help solve it. The first topic is the one powering everything you will see built inside GTMcraft: how to run Claude as a GTM operator.

I ran ChatGPT for two years and almost missed what Claude actually is

At the start of 2026, I switched from ChatGPT to Claude. I had been running ChatGPT for two years with carefully crafted prompts and getting decent output. Good enough that I did not feel the pull to change.

What changed my mind was not a benchmark. It was watching what Claude’s Projects, Skills, and MCP integrations made possible when wired together. Context that persists across sessions. Workflows that trigger automatically. Output that does not reset every time you open a new chat. ChatGPT is a powerful tool. Claude, when built as an operating system, is infrastructure.

The honest version of my journey: I spent most of 2025 treating AI like a sophisticated search engine. Better prompts, better output. That logic gets you to 80%. The last 20% — the part where hours come back and teammates can reproduce your output without asking you how — requires building the system around the model. Foundation files. CLAUDE.md. Persistent memory. Wired MCPs. Named Skills. Scheduled agents.

I started seeing the same gap across the Pavilion community. One person on a GTM team getting extraordinary output. Eight others opening fresh chats and wondering why it is not working for them. The difference is never the model. It is the system around it.

This week’s three-edition arc gives you that system.

The four failure patterns that keep most operators stuck

Prompt library as strategy.

You collected 80 prompts in Notion. You use three of them. The rest go stale because prompts without persistent context are inherently brittle. Foundation files written in Q1 still run clean in Q4. Prompt libraries rot faster.

One power user, nine spectators.

Your best seller or marketer gets 10x output from Claude. Nobody on the team can reproduce it. The workflows live in their head. When they leave, the output quality leaves with them.

MCP theatre.

You connected Notion and Gmail to Claude in one excited afternoon. You never built a workflow that uses both. The connections go cold. The setup was compliance, not a system.

Model chasing.

Every time Anthropic ships a new model, you spend two days re-testing your workflows. You are not making progress. You are burning hours on infrastructure churn. Changing your substrate every three months because a new benchmark dropped leaves you with a year of AI spend and no compounding output.

What the system looks like

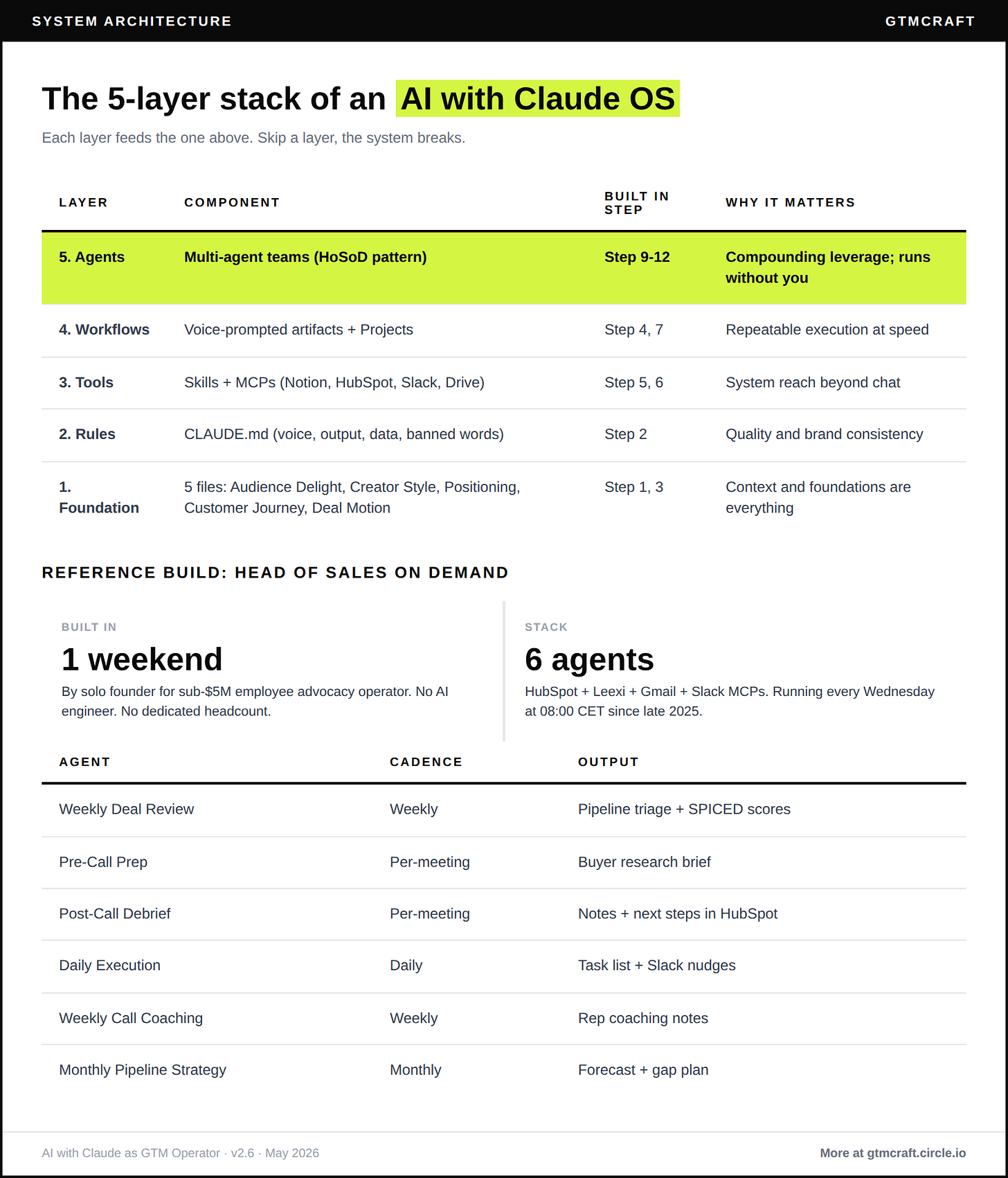

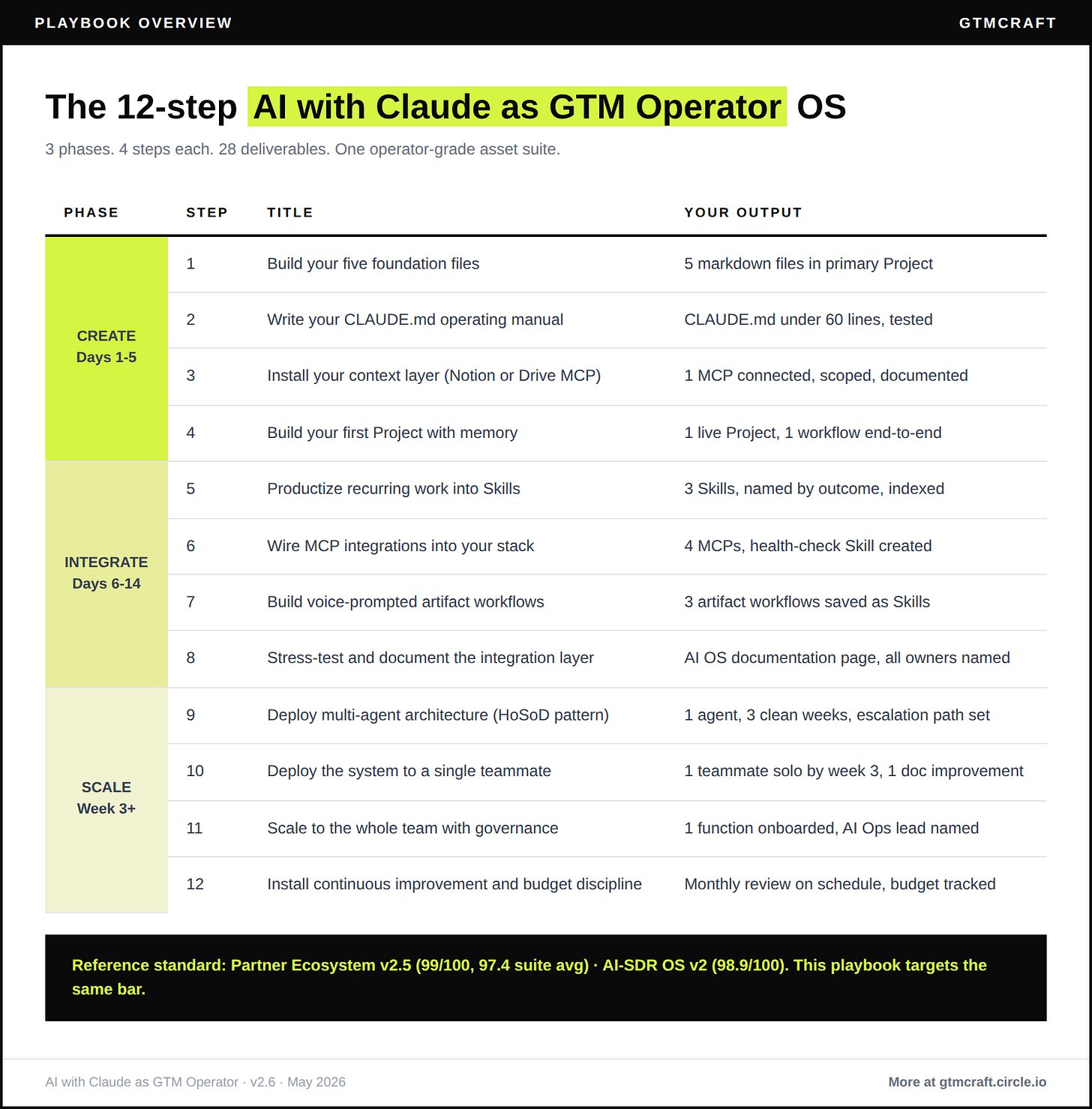

The AI with Claude operating system runs in 12 steps across three phases.

Phase 1 CREATE (days 1 to 5). You build five foundation files that define your audience, voice, positioning, buyer journey, and deal motion. You write a CLAUDE.md operating manual. You wire one MCP and run your first Project with memory. This phase feels like writing documents. The compounding starts in week 4.

Phase 2 OPERATIONALISE (days 6 to 14). You productize your top three recurring workflows into Skills. You wire four MCPs. You build voice-prompted artifact workflows that ship docs, slides, and reports instead of chat replies. By the end of week 2, your AI is producing output while you review, not while you re-prompt.

Phase 3 SCALE (week 3 onward). You deploy scheduled agents that run while you sleep. You roll the system to one teammate, then your whole GTM function. You install governance so quality does not drift when you stop watching.

The output is a portable, version-controlled operating system that travels with you across Chat, Cowork, and Code.

The business case in concrete terms

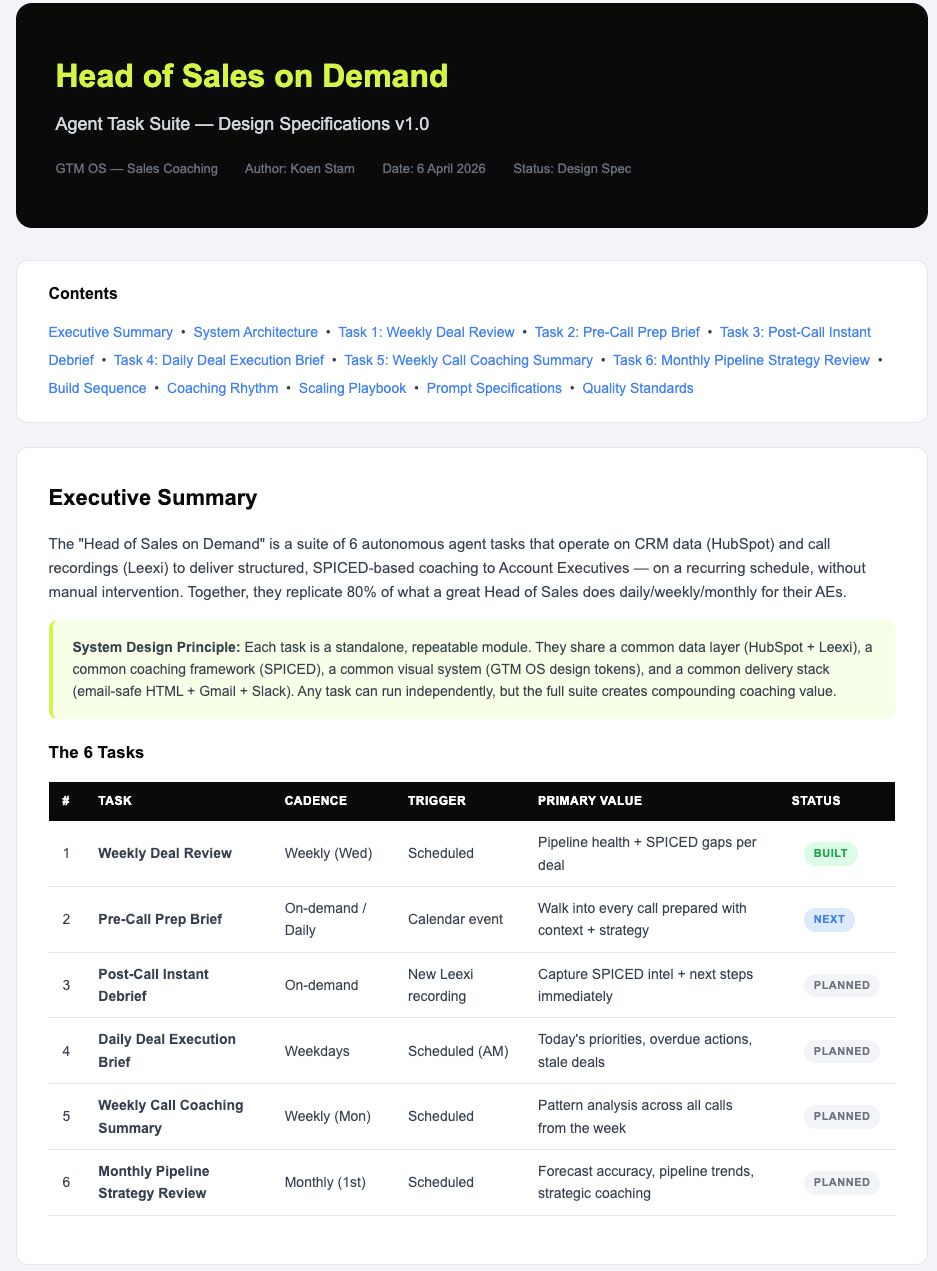

A sub-$5M employee advocacy operator I coached deployed a six-agent Head of Sales on Demand suite on HubSpot, Leexi, Gmail, and Slack over a single weekend. Six agents: Weekly Deal Review, Pre-Call Prep, Post-Call Debrief, Daily Execution, Weekly Call Coaching, Monthly Pipeline Strategy.

Their AE now walks into every call with SPICED-scored prep, a coach-reviewed next action, and a debrief that updates HubSpot without manual logging. Every Wednesday at 08:00 CET, the Weekly Deal Review runs itself. The ninety-minute review became a twelve-minute scan.

Six to eight hours per week returned from day one, climbing to fifteen-plus by day 90 as workflows mature into background agents. No dedicated AI engineer.

Your entry point by role

Founder-CEO: start with Step 1 (foundation files) and Step 9 (Head of Sales on Demand deployment). Delegate Steps 5 to 8 to a champion.

VP Sales or RevOps: start with Step 4 (first Project with memory) and Step 9 (agent suite). Run Steps 1 to 3 in parallel.

VP Marketing: start with Steps 1 to 3 and Step 6 (MCP integrations). Hand off to the AI-SDR playbook for outbound sequencing.

Fractional operator: start with Step 2 (CLAUDE.md) and Step 10 (deployment on client systems).

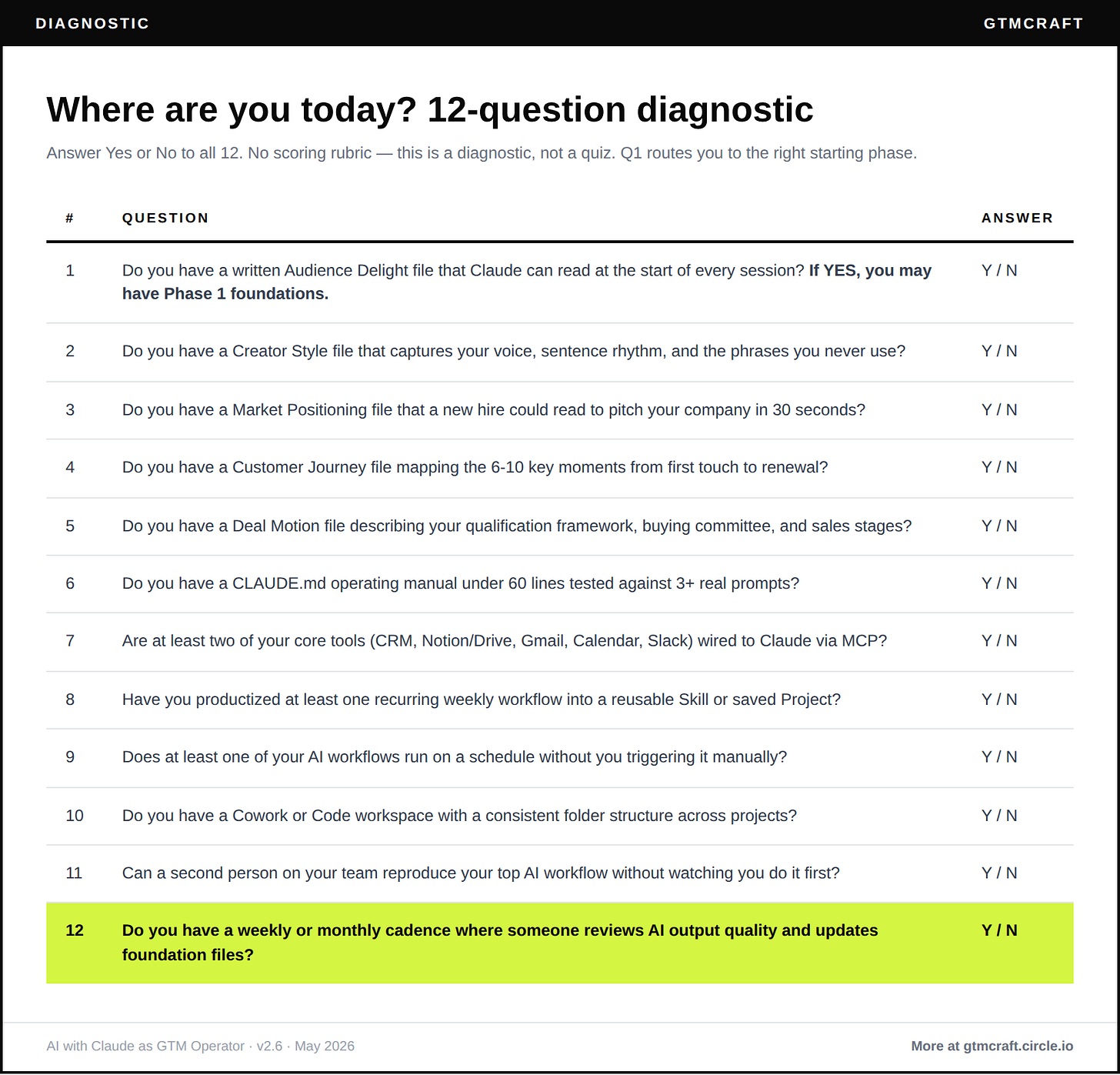

Your Claude Chat self-assessment prompt for AI operating model maturity

Copy this into a fresh Claude Chat session:

You are an AI operating model coach. I want to assess my current setup as a GTM operator.

Ask me 12 questions, one at a time, across: foundation files, CLAUDE.md, MCP integrations, productized Skills, voice-prompted artifact workflows, scheduled agents, team rollout, and governance cadence.

After each answer: confirm what I have, flag what is missing, score it 1 to 5.

After all 12, tell me:

- My maturity level (Level 1 Prompting 12-24 / Level 2 Context 25-36 / Level 3 Integration 37-48 / Level 4 Infrastructure 49-60)
- Which phase of the AI with Claude operating system to start from
- One action I can complete this week to move up one level

My company: [one sentence]

My role: [founder / VP Sales / VP Marketing / RevOps / fractional]

My team size: [GTM headcount]

This takes ten to fifteen minutes. The output tells you exactly where to start on Wednesday.
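The scoring bands in the self-assessment are simple arithmetic: 12 questions scored 1 to 5 give a total between 12 and 60, split into four 12-point bands. If you want to track your score across quarters outside of Claude, here is a minimal Python sketch, assuming you record the twelve scores yourself. The function name `maturity_level` and the `BANDS` table are hypothetical helpers for illustration, not part of any Claude feature.

```python
# Hypothetical helper: maps the 12 self-assessment scores (1 to 5 each)
# to the four maturity bands named in the prompt.
BANDS = [
    (12, 24, "Level 1 Prompting"),
    (25, 36, "Level 2 Context"),
    (37, 48, "Level 3 Integration"),
    (49, 60, "Level 4 Infrastructure"),
]


def maturity_level(scores: list[int]) -> tuple[int, str]:
    """Return (total score, maturity level) for exactly 12 scores of 1 to 5."""
    if len(scores) != 12:
        raise ValueError("expected 12 scores, one per question")
    if any(s < 1 or s > 5 for s in scores):
        raise ValueError("each score must be between 1 and 5")
    total = sum(scores)  # always between 12 and 60 after validation
    for low, high, label in BANDS:
        if low <= total <= high:
            return total, label
    raise AssertionError("unreachable: total is always within 12-60")


# Example: mostly 3s, with a few 2s and 4s
example = [3, 3, 2, 4, 3, 2, 3, 3, 4, 2, 3, 3]
print(maturity_level(example))  # → (35, 'Level 2 Context')
```

The bands are inclusive on both ends, so a total of 36 still counts as Level 2 and 37 is the first Level 3 score.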

What comes next

Wednesday covers Phase 1 in full: the five foundation files, CLAUDE.md, your first Project with memory, and why the context layer matters more than any model upgrade. Every step includes a real prompt you can copy and run that day.

Friday covers Phases 2 and 3: Skills, MCPs, voice-prompted artifact workflows, scheduled agents, team rollout, and governance. Plus a 4-prompt pack and the companion doc with the complete self-assessment, scoring bands, and cadence templates. All free.

A note on this playbook

This is version 1 of the AI with Claude GTM playbook. It will be updated at least quarterly, and more often for Claude specifically, because the product surface moves fast. Cowork launched in March 2026. Managed Agents shipped in April. The next version will reflect what changed.

What will not change: the foundation layer. Five files. CLAUDE.md. One MCP. One Project. Get that right, and every model update compounds on a stable base.

Reply and tell me where your team is today. I read every reply.

See you inside GTMcraft,

Koen

Your (human) GTM Agent

Hi, it is Koen Stam, and welcome to GTMcraft: The Future GTM Operator. This newsletter is built from 100,000+ GTM signals collected from 100+ operators and founders, combined with 13+ years of my own lessons and failures from the trenches. I write at the intersection of go-to-market practice and AI-powered systems for founders scaling from 0 to 10M+ ARR.

100,000+ GTM-relevant signals indexed from LinkedIn, newsletters, and podcasts. 100+ playbooks structured. 3 GTM operator jobs across 3 GTM motions (SMB, MM, ENT). Same recipe. Now opening as a community.